I built an AI research tool to study 9 Substack creators. Here’s what 3,000 notes show.

The most-posted format turns out to be the worst performer. Five data-backed patterns from notes across 9 AI and tech creators.

3,230 Substack notes. Nine creators. Three structured passes with Claude. No spreadsheet, no manual sorting. Classified by attachment type, ranked by engagement, patterns surfaced from the data. The most-posted format turns out to be the worst performer. The highest-liked note in the cohort is 53 words of pure text. Five patterns held across all nine.

Growth gurus have been consistent on one thing: to grow on Substack, you need to work on Notes.

So I started paying real attention. Not just posting more. Actually studying what works. I picked nine creators I genuinely follow, built a tool to pull their notes at scale, and ran the data through Claude in three structured passes. 4,100 items fetched. 3,230 kept after filtering. Found patterns I couldn’t see by scrolling.

A poll I kept running in past articles got the same question back every time: *How do you actually do research with AI?*

Not the theory. The actual process. This article is one honest answer to that. Pick a question, give Claude access to the data, and ask your questions in a deliberate order. For the niche-mapping version of this, what 1,200 Substack newsletters revealed about the AI and tech space runs the same three-pass method on newsletter-level data.

This article does two jobs at once: it shows what the notes data says, and what AI-assisted research actually looks like when you’re doing it for real. The research tool is a lightweight AI agent: it collects data, processes it, and surfaces patterns autonomously.

What’s inside:

How to use an MCP research tool for Substack Notes: 3 structured Claude passes on 3,230 notes, no code

What made two notes go viral at 1,800+ likes each: specific claim + complete value, under 60 words, 80x outperformance

5 engagement patterns across all 9 creators: pure text wins, post-link notes underperform, 4x spread explained

5 Substack note formats that consistently outperform: Tactical Revelation, Named Behavior, Worldview Statement, Milestone Done Right, Post Link Done Right — with real examples

How to analyze your own Substack Notes performance with AI: the 3-pass manual method (20 minutes, free), plus how to run the same analysis across 500 notes and multiple creators with the MCP tool

This is part of a series I’m building on how I use AI for research, not just building apps. Different questions, different data sources, same approach. More coming.

Hi, I’m Jenny 👋

I build AI systems and tools, then share how I did it. I run the Practical AI Builder program — for people who already use AI and want to build real things with it. Check it out if that sounds like you.

If you’re new to Build to Launch, welcome! Here’s what you might enjoy:

- How to grow Substack from zero in 2026

- The viral notes system

- How to Do Research With AI Effectively

How I Built an MCP Research Tool for Substack Notes

The tool is a custom MCP.

Quick context if you’re new to these: an MCP (Model Context Protocol server) is what lets Claude connect directly to an external system, without you copy-pasting, tab-switching, or manually pulling data. You build it once, and Claude can read from it any time you ask.

I use them for everything. One connects Claude to my Google Drive. One to Notion. One to my local dev environment, my database, Gmail.

Each one is a direct line from Claude to something I actually work with, so I can think and build without stopping to go get information.

→ New to MCPs? Start here: MCP Explained: How I Turned My Second Brain into Connected Intelligence · Best MCP Servers for Claude Code

This time I pointed it at Substack. Not to post. I already have tools for that. But to study. Nine creators I genuinely follow:

Wyndo — one of the first accounts that made me think Notes could be more than announcements. Highest average engagement in this entire cohort.

Mia Kiraki 🎭 — came from theatre, took it to AI. Generates more comments per note than almost anyone else in this study.

Dr Sam Illingworth — the most consistent writer here by some distance. 499 own notes in the sample, 33.4 average likes per note.

Daria Cupareanu — one of my close contacts since day one. Has a wavelength that flips how you think about AI before you notice it happened.

Anfernee — highest posting volume of anyone I studied. By a lot. The contrast case this analysis needed.

Karo (Product with Attitude) — one of the most generous community builders I’ve seen on this platform. Her community notes are something to study.

Karen Spinner — one of my favorite builders on Substack. Solopreneur coder without a CS degree, writing from the middle of the build.

Code Like A Girl — a publication rather than a solo creator. Spotlights women in tech and does it loudly. I added this one because the format is genuinely different from everyone else.

Me — Build to Launch. I ran this on myself too. Turned out to be more interesting than I expected.

And the tenth: AI Meets Girlboss. Substack returned a 404 every time I tried to retrieve her notes. I blame Substack.

You give it a Substack newsletter URL. It returns up to 500 of that creator’s recent notes. Each one comes back like this:

Author: @miakiraki

Body: "Before working on anything super important, I run this first:..."

Date: 2026-03-09

Likes: 42 | Comments: 5 | Restacks: 0

Attachment: none (pure text)

Body text. Exact metrics. Attachment type. You can’t get that from scrolling someone’s feed. I needed numbers, not impressions.
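If you want to work with records like this outside the chat, here is a minimal sketch of parsing one into a structured object. It assumes the exact five-field text layout shown above with a single-line body; the `Note` class and `parse_note` function are hypothetical names for illustration, not part of the MCP tool.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class Note:
    author: str
    body: str
    posted: date
    likes: int
    comments: int
    restacks: int
    attachment: str


def parse_note(text: str) -> Note:
    """Parse one record in the five-field layout shown above (assumed format)."""
    fields = {}
    for line in text.splitlines():
        # Split on the first colon only, so colons inside the body survive.
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip().lower()] = value.strip()

    # The metrics share one line: "Likes: 42 | Comments: 5 | Restacks: 0".
    metrics_line = "Likes: " + fields["likes"]
    metrics = {
        name.lower(): int(count)
        for name, count in re.findall(r"(\w+):\s*(\d+)", metrics_line)
    }
    return Note(
        author=fields["author"].lstrip("@"),
        body=fields["body"].strip('"'),
        posted=date.fromisoformat(fields["date"]),
        likes=metrics["likes"],
        comments=metrics["comments"],
        restacks=metrics["restacks"],
        attachment=fields["attachment"],
    )


SAMPLE = """Author: @miakiraki
Body: "Before working on anything super important, I run this first:..."
Date: 2026-03-09
Likes: 42 | Comments: 5 | Restacks: 0
Attachment: none (pure text)
"""

note = parse_note(SAMPLE)
print(note.author, note.likes, note.attachment)
```

Once every note is an object with numeric fields, sorting and averaging become one-liners instead of eyeballing.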

4,100 total items fetched. About 20% of that is noise: social graph bleed, silent restacks, other people’s notes bleeding in. Leave those in and your averages are wrong. I filtered by author handle for every creator before touching the numbers. 3,230 clean notes. Nine people. Same-ish niche. Same time period. Very different results.
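The handle filter is simple enough to sketch. This is an illustration under assumptions, not the tool's actual code: each fetched item is assumed to be a dict with an `author` key, and `keep_own_notes` is a made-up helper name. The sample items are invented; only the 4,100 and 3,230 counts come from the study.

```python
def keep_own_notes(items, handle):
    """Keep only items written by the given handle (case-insensitive, '@' optional)."""
    wanted = handle.lstrip("@").lower()
    return [
        item for item in items
        if item["author"].lstrip("@").lower() == wanted
    ]


# Invented feed: two own notes plus one that bled in from another account.
fetched = [
    {"author": "@miakiraki", "likes": 42},
    {"author": "@someoneelse", "likes": 310},
    {"author": "@MiaKiraki", "likes": 7},
]
own = keep_own_notes(fetched, "@miakiraki")
print(len(own))  # 2

# The study's counts: 4,100 fetched, 3,230 kept.
noise_share = (4100 - 3230) / 4100
print(f"{noise_share:.0%} of fetched items were noise")
```

In the toy feed, the stray 310-like note would have tripled the apparent average if it stayed in, which is why the filter runs before any numbers.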

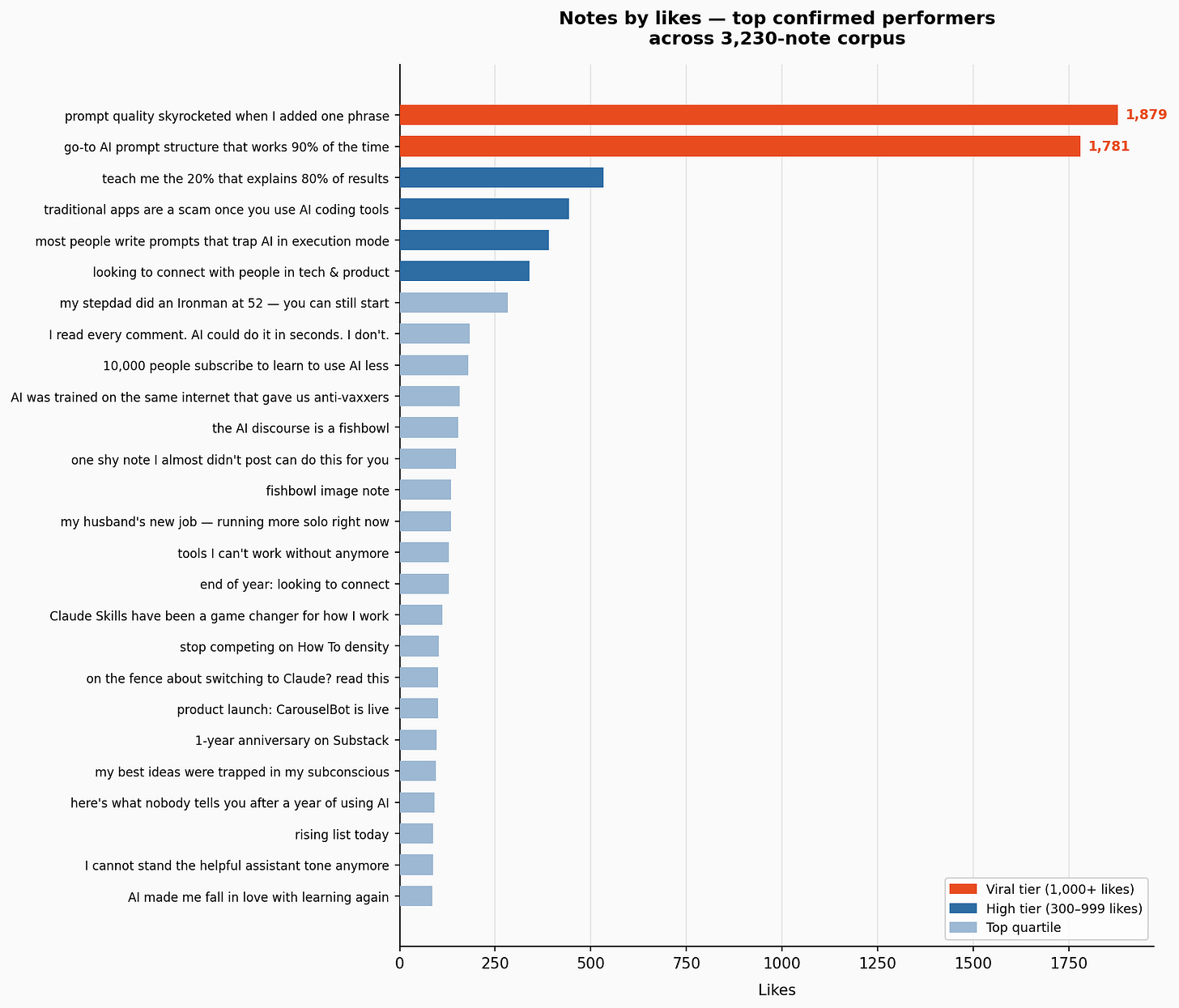

Nine roles kept showing up across these creators’ notes. I’ll define them here, then reference back to how they perform:

High-volume motivational — maximum output, short punchy notes, motivational tone. Quantity dominates.

Daily infrastructure — shows up like clockwork. Consistency is the strategy. Rarely misses.

Worldview repetition — posts the same core beliefs, framed differently each time. Consistent identity, not topic diversity.

Theatrical AI builder — high-frequency posting with a distinct persona. Volume and voice working together.

Pattern revealer — surfaces hidden structures in how things work. Notes that make you think “I’ve never seen it put that way.”

Reusable templates — notes structured as prompts, frameworks, or formulas you can copy directly.

Curiosity curator — shares what they’re learning and noticing. The note is the thinking, not a conclusion.

Community connector — spends more energy amplifying others than publishing themselves. Connector identity.

Community publication — not a solo creator. A brand voice posting on behalf of a community.

From there, the analysis was a conversation. I didn’t write code to process the data. I asked Claude to do it, in the same session where the notes were already loaded.

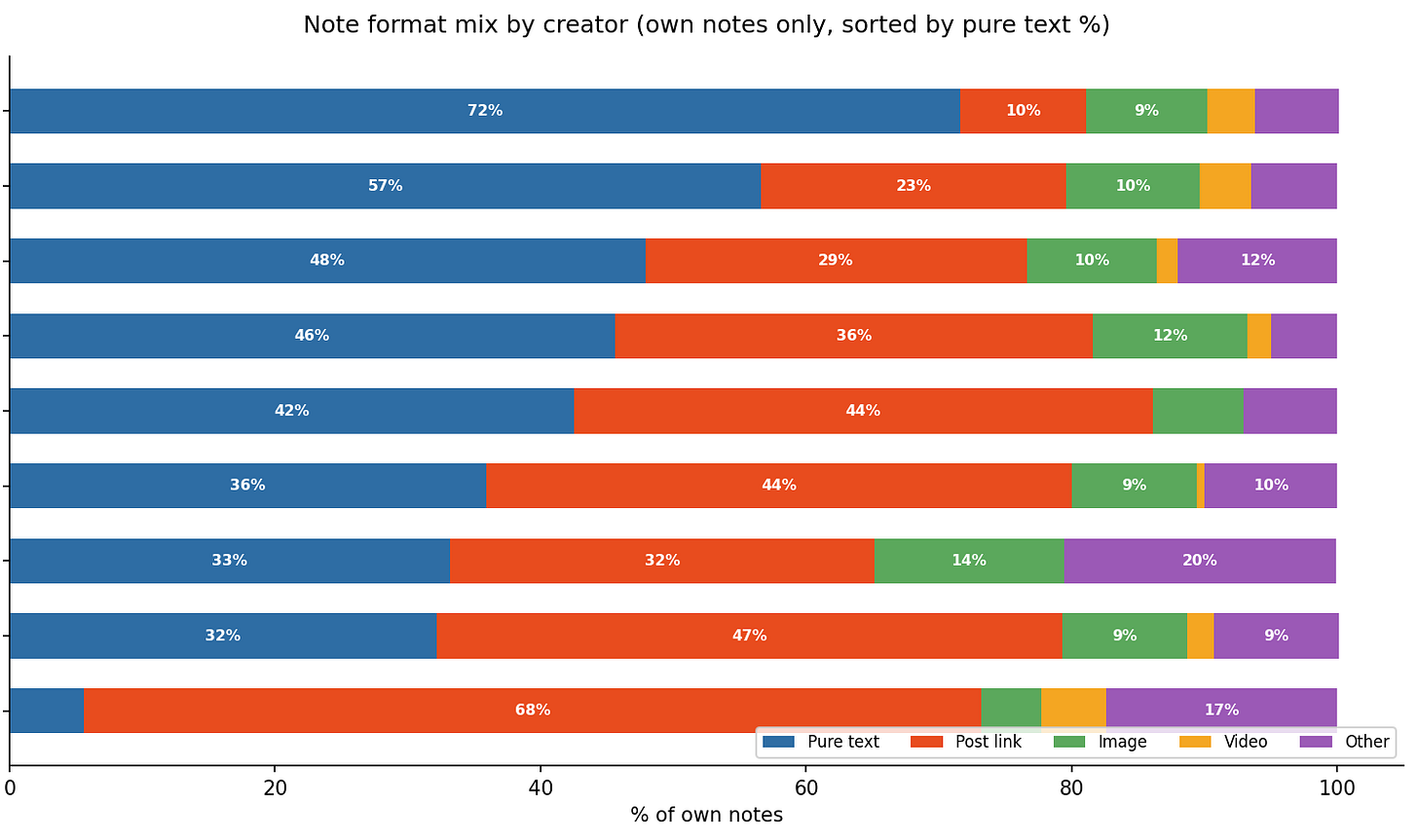

First pass: classify every note by attachment type across all nine creators. Pure text, post link, image, comment/reply. Sort each creator’s notes by likes. Give me the top ten per creator.

Second pass: what do the top-performing notes have in common structurally? Not topic. Structure. What is the opening line doing? Is the value inside the note or behind a click? Does it teach, or does it name something?

Third pass: what patterns hold across all nine, not just one creator? Where does the data contradict the conventional advice?

That last question is where the patterns in this article came from.

Three conversation passes, structured prompts, no manual spreadsheet. The MCP handled the retrieval. Claude handled the synthesis.

My job was asking the questions in the right order.
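The first pass was done in conversation, not code. If you'd rather check the ranking yourself, a minimal sketch could look like this. Only the `none (pure text)` attachment label appears in the sample record above; the other label spellings, the dict layout, and both function names are assumptions for illustration.

```python
from collections import defaultdict


def classify(attachment: str) -> str:
    """Map a raw attachment label to one of the four buckets used in pass one."""
    text = attachment.lower()
    if text.startswith("none"):
        return "pure text"
    if "post" in text or "link" in text:
        return "post link"
    if "image" in text:
        return "image"
    if "comment" in text or "reply" in text:
        return "comment/reply"
    return "other"  # anything the assumed labels don't cover


def top_notes_per_creator(notes, n=10):
    """Group notes by author, then keep each author's n most-liked notes."""
    by_creator = defaultdict(list)
    for note in notes:
        by_creator[note["author"]].append(note)
    return {
        author: sorted(items, key=lambda item: item["likes"], reverse=True)[:n]
        for author, items in by_creator.items()
    }


# Invented sample data, only to show the shape of the result.
notes = [
    {"author": "a", "likes": 12, "attachment": "none (pure text)"},
    {"author": "a", "likes": 50, "attachment": "post"},
    {"author": "b", "likes": 8, "attachment": "image"},
]
for note in notes:
    note["format"] = classify(note["attachment"])

top = top_notes_per_creator(notes, n=1)
print(top["a"][0]["likes"], top["a"][0]["format"])
```

Passes two and three ask about structure and contradiction, which is where a language model earns its place; this part is plain sorting.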

If you want to use this MCP yourself, I wrote up the installation and usage here.

What Made These Two Notes Go Viral? (1,800+ Likes Each)

When you sort by likes, most notes in this cohort land somewhere between 5 and 50. There are two exceptions.

@wyndo — “My prompt quality skyrocketed when I added one phrase...” — 1,891 likes, 167 restacks, pure text

@jennyouyang — “My go-to AI prompt structure that works 90% of the time...” — 1,781 likes, 194 restacks, post + text

The next highest in the entire cohort: 339 likes. These two are roughly 5x that. Nearly 80x the cohort average.

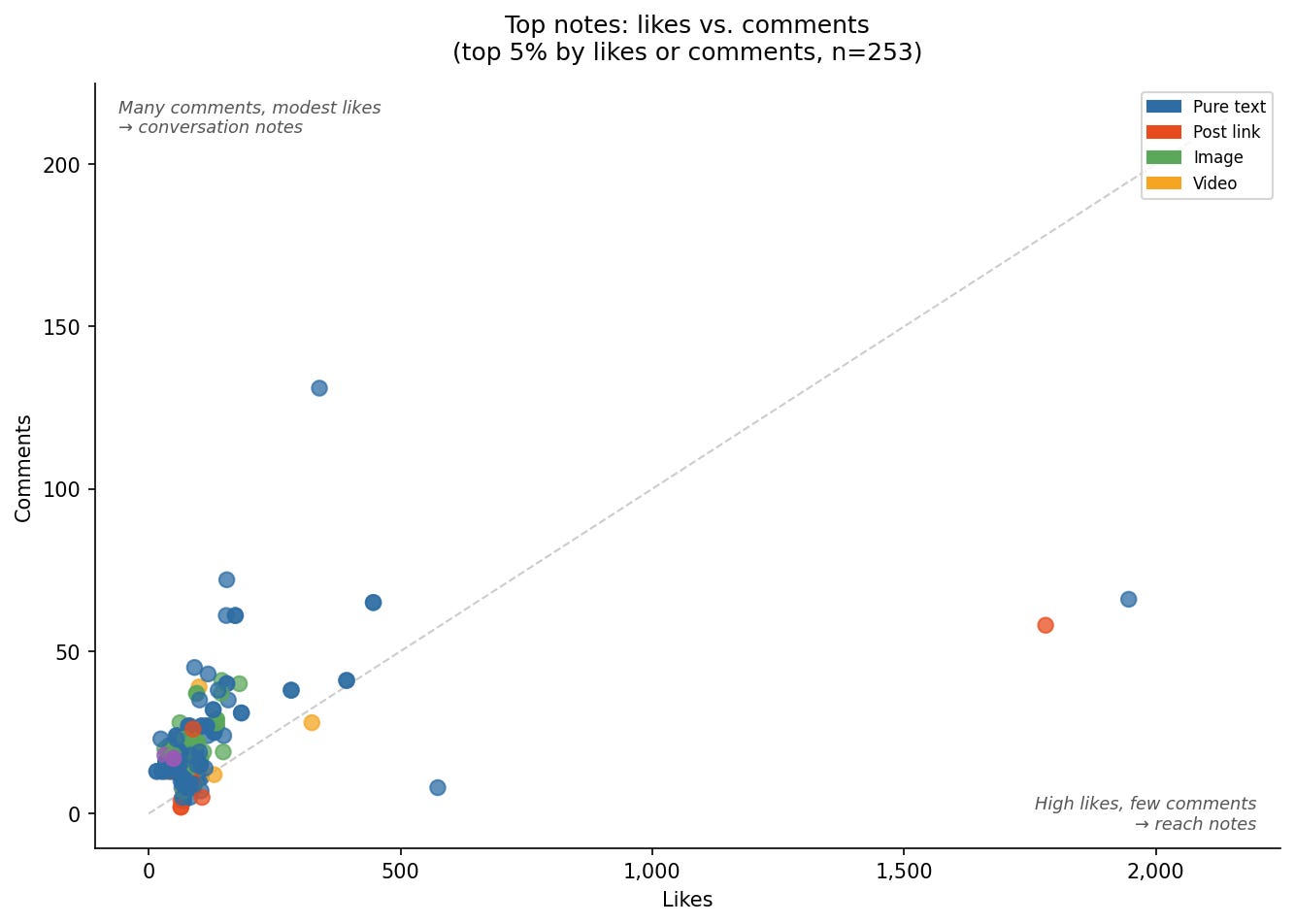

Top confirmed notes by likes across the 3,230-note corpus. Two notes break away from the rest by an order of magnitude.

What they have in common: **both open with something specific.**

Not “here’s a useful tip.” “Works 90% of the time” is a rate. “Skyrocketed” implies a result. And both deliver the actual thing: a prompt, a phrase, a structure. Right there in the note. No click required. Both are under 60 words.

The top note opens with “skyrocketed,” then delivers a single phrase you can copy straight into your next prompt. It ends with a reframe: AI as a thinking partner, not an answer machine. 53 words. No image, no link. 1,891 likes.

Mine at 1,781 likes followed the same structure.

That same pattern holds one level down. Wyndo’s second-best note (541 likes) opens with a specific learning method and delivers the exact prompt sequence in the note body, no link needed.

Four of the five top notes across 3,230 are built this way.

I started calling it the tactical revelation format. Specific claim. Immediately usable thing in the body. Complete without any click. Short enough to remember. That’s it.

If you want the full story of what happened after that note took off, I wrote about it here.

What 3,000 Substack Notes Reveal About Engagement Patterns

Before the patterns: a note on what the averages are actually measuring.

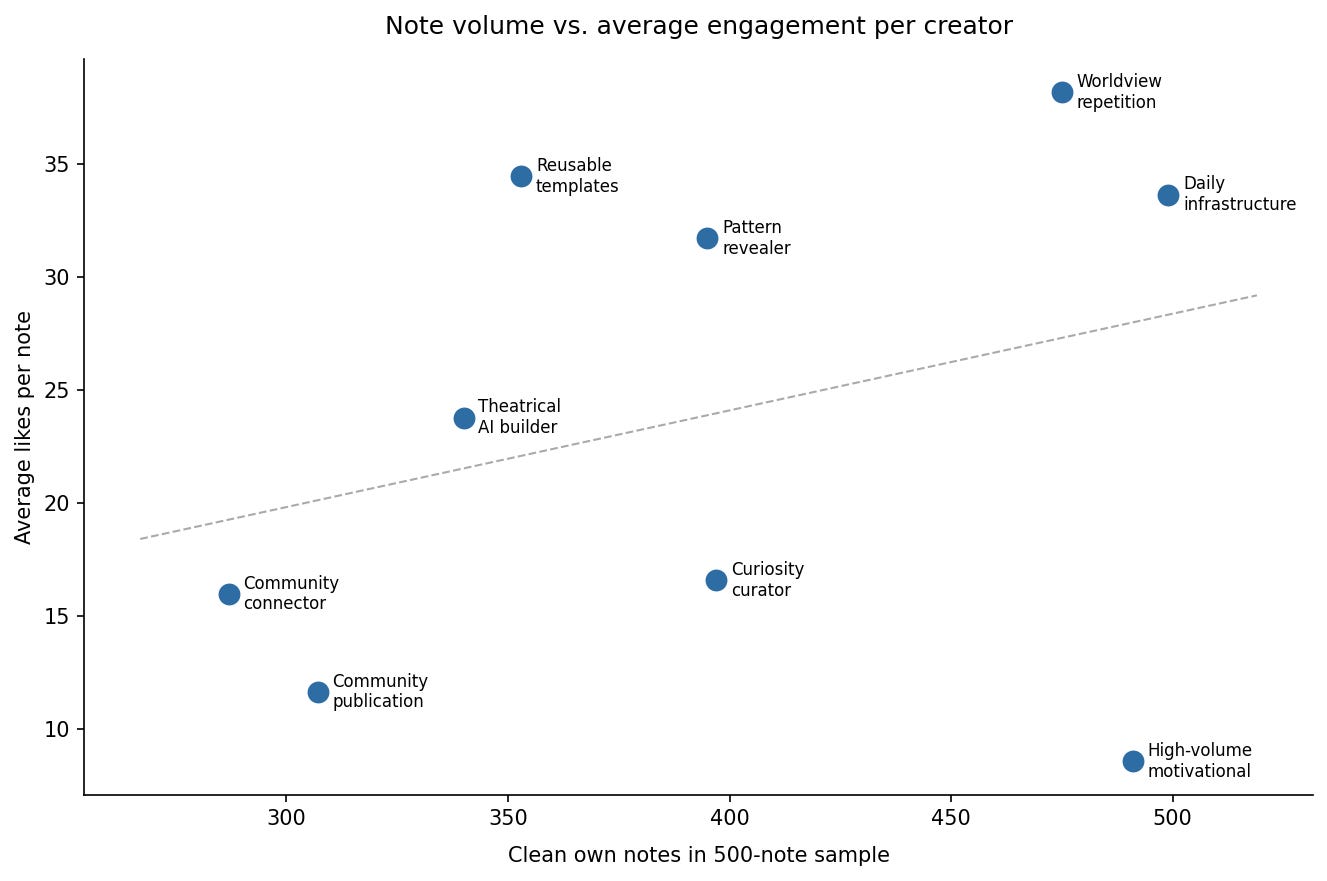

Average likes per note is a useful signal, but it flattens something important. A creator posting 499 notes at 33 average likes has generated over 16,000 total likes in this sample window. That’s a different compounding trajectory than someone posting 50 notes at higher individual numbers. Volume at consistent quality doesn’t just add. It multiplies.

Not one creator, not one good week. These held across all nine.

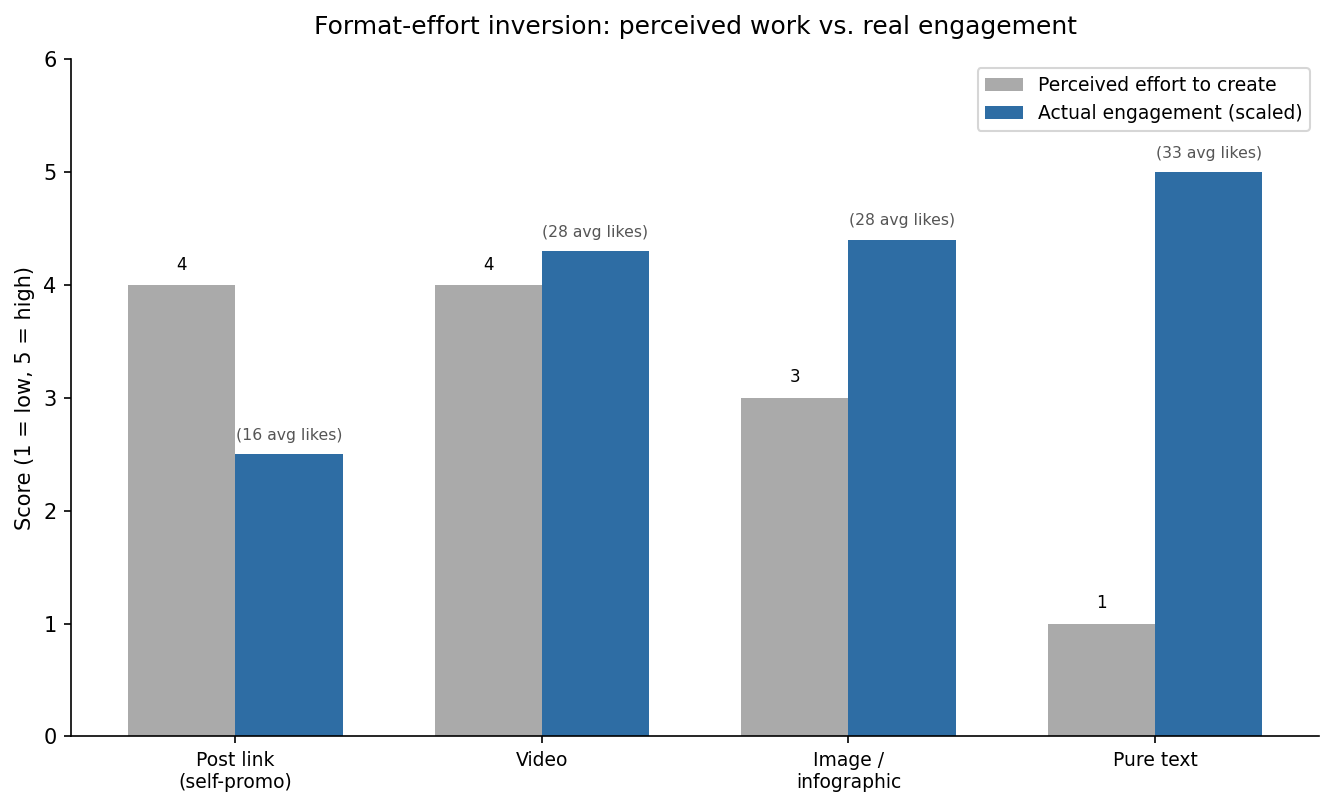

Why Pure Text Notes Get More Likes Than Images or Links

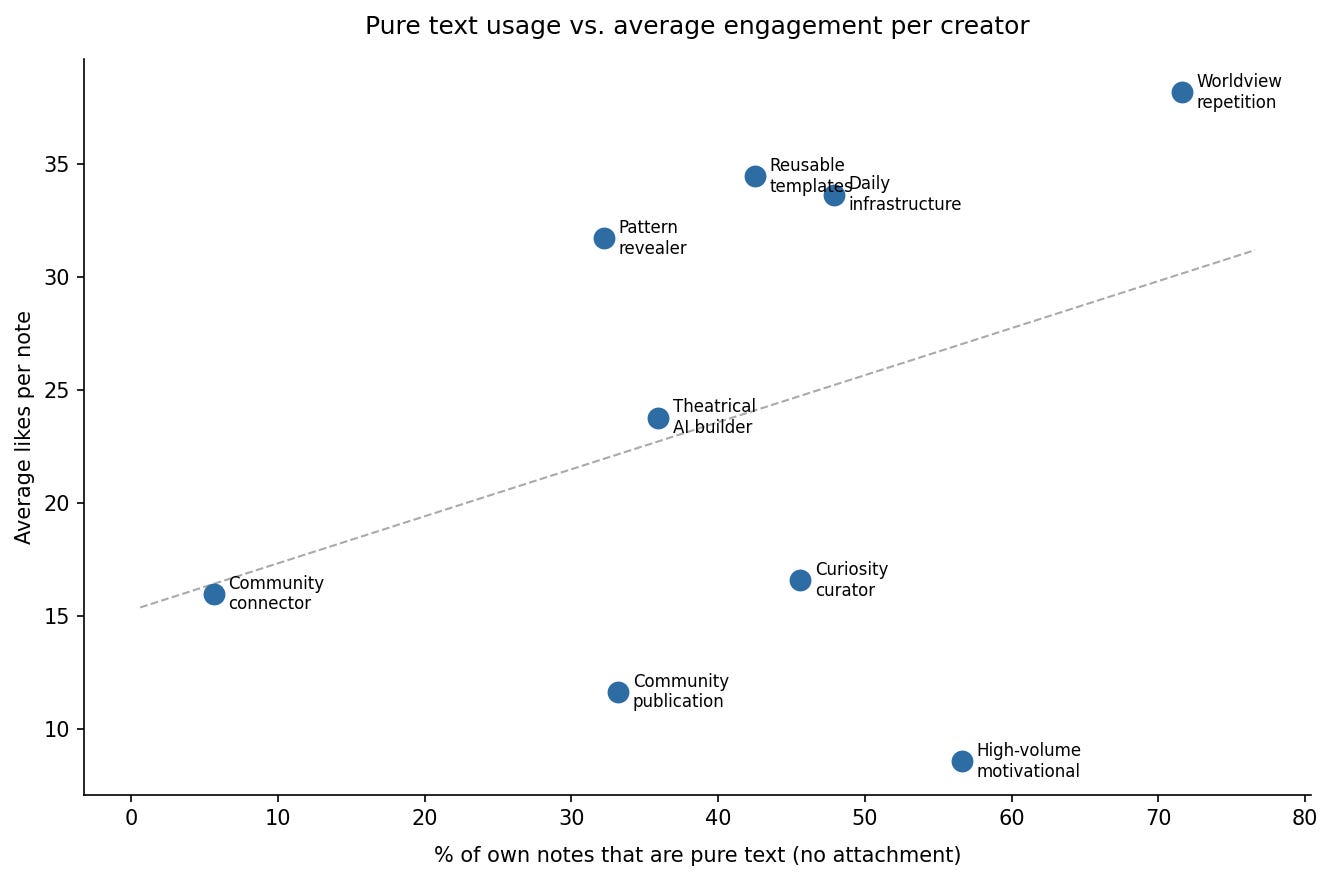

The top two by per-note engagement (one at 37.9 average likes, one at 33.4) both post predominantly pure text, above 70%. When I sorted by attachment type across the full corpus, pure text came out ahead every time.

The catch: pure text isn’t the mechanism on its own. Anfernee is the interesting exception: 57% pure text, 8.6 average likes. But most of his audience lives outside Substack. Notes engagement rewards creators whose readers are already on the platform, and that context matters. Strip that out, and the pattern holds: the variable isn’t the format. It’s whether the notes consistently come from the same point of view.

Pure text usage vs. average engagement across 9 creators.

Why Post-Link Notes Underperform (And the Exception That Works)

Post-link notes consistently underperform standalone notes. Karen’s feed is 68% post-link, and most of those are promoting others’ work, not her own. Generous, but low engagement by nature. The comments and likes concentrate on her original notes, the ones where she’s actually saying something herself.

The exception: post-link notes where the body is complete on its own, where the link is optional, not required to understand what was said. Those perform close to standalone levels. The test: if your note doesn’t make sense without clicking, it’s not a note. It’s a promo.

What Drives Comments vs. Likes on Substack Notes (They Measure Different Things)

Mia’s most-liked note (154 likes) makes a useful observation about AI writing. Her most-commented note (72 comments, the highest in this entire cohort) is this:

And then I got annoyed that I can’t just WRITE NORMALLY anymore. That was a completely fine sentence structure before bots ruined it for everyone.

No useful information. 72 comments. The notes that generate conversation are the ones that name something people do but don’t admit. Not the ones that teach. Decide which you’re optimizing for before you start. They pull in different directions.

Likes and comments are not measuring the same thing. Two distinct clusters emerge across note types.

How Worldview Consistency Drives Substack Notes Performance (Not Volume)

Three creators in this sample each posted between 475 and 499 own notes. Nearly identical output rates. Per-note averages across the three: 8.7, 33.5, 37.7. That’s a four-to-one gap from the same volume.

Note volume vs. average engagement across 9 creators. Posting more notes does not produce more likes per note.

One of the more revealing patterns: the creator with the lowest per-note average has a clear split in their own data. Their highest-performing note (“Your business should serve your life. Not consume it.”) has nothing to do with AI. Their purely motivational notes consistently outperform their AI content. The data is telling you what the audience actually followed them for. The feed just hasn’t caught up yet.

The difference isn’t how much they post. It’s whether note 200 sounds like it came from the same person as note 1. Code Like A Girl shows what this looks like when the constraint doesn’t apply. It’s a multi-author publication with a rotating set of voices. Per-note engagement is lower, but comparing it to solo creators would be like comparing a magazine to a column. Different purpose, different metric.

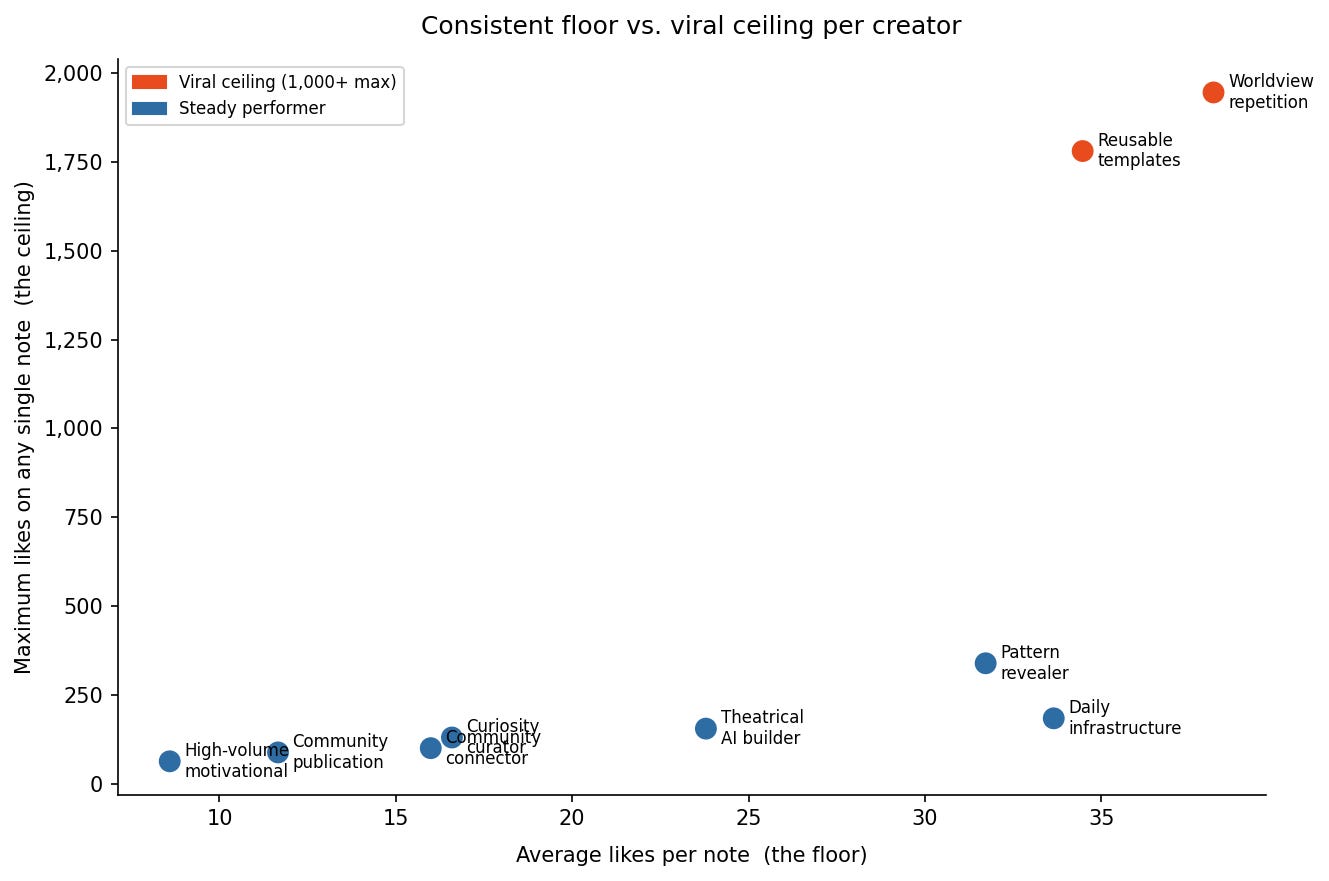

Going Viral vs. Building Consistent Voice: What the Data Shows

Going viral raises your floor once: more followers, a higher baseline for the next note. The two outlier notes in this cohort did that. But it’s a single event. A 33-average across 499 notes isn’t a single event. It’s 499 notes of the same point of view, compounding.

Average likes (the floor) vs. maximum likes (the ceiling) per creator. Two distinct strategies, both producing results.
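For readers who want the same floor and ceiling figures for their own cohort, here is a rough sketch. It assumes notes arrive as `(author, likes)` pairs; the function name and the sample numbers are made up, and only the 499 × 33 arithmetic comes from the article.

```python
from collections import defaultdict
from statistics import mean


def floor_and_ceiling(notes):
    """Per author: note count, average likes (floor), max likes (ceiling), total likes."""
    likes_by_author = defaultdict(list)
    for author, likes in notes:
        likes_by_author[author].append(likes)
    return {
        author: {
            "notes": len(likes),
            "floor": mean(likes),
            "ceiling": max(likes),
            "total": sum(likes),
        }
        for author, likes in likes_by_author.items()
    }


# Made-up numbers: one steady creator, one who spiked once.
sample = [
    ("steady", 30), ("steady", 35), ("steady", 34),
    ("spiky", 5), ("spiky", 90), ("spiky", 7),
]
stats = floor_and_ceiling(sample)
print(stats["steady"])
print(stats["spiky"])

# The article's own example: 499 notes at a 33-like average.
print(499 * 33)  # 16467, the "over 16,000 total likes" figure
```

Looking at floor, ceiling, and total side by side makes it easier to tell a single spike from a steady baseline.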

The difference between a creator who spikes and levels off versus one who builds steadily for months isn’t talent or topic. It’s accumulated recognition. Readers identify the voice before they finish the opening line. That’s what brings them back.

Karen’s highest-performing note didn’t teach anything. It tagged 20 people by name: everyone who’d encouraged her to finally write a particular article. No useful information. Just a moment that made 20 specific people feel seen. It matched her best informational note on engagement. The audience wasn’t there for information. They were there for her.

That’s what matters.

Five Substack Note Formats That Consistently Outperform

The five patterns above are observations about the data. These five formats are what they look like in practice: what you actually write when you want to apply them.

The formats that look like the most effort perform the worst. The ones that look effortless win consistently.

The Tactical Revelation

Opens with a specific credibility claim. Delivers something immediately usable in the note body: a prompt, a phrase, a method, a template. Complete without any link.

What kills it: vagueness (“here’s something that helped me”) without showing the thing, or making the reader click to get it. The reveal has to be in the note. The moment the value lives behind a link, the note becomes an ad.

Daria Cupareanu takes this furthest: she pastes the actual prompt text inside the note. Not what the prompt does, not a screenshot — the full thing, ready to copy. The note becomes the artifact. People save it rather than liking it and moving on.

The Named Behavior

Names something people do but don’t admit out loud. First person. No advice or fix at the end. Just the recognition.

What kills it: adding a solution. The moment you give a fix, the note shifts from “I see you” to “here’s what to do,” and the comments typically drop by half. Mia’s 72-comment note ends on the behavior itself: the annoyance, the irony, nothing resolved. That’s why it hit.

The Worldview Statement

A clear take, stated plainly. Not a tip. Not a thread. Just what you actually think, with no hedge.

What kills it: qualifiers. “I think,” “in my experience,” “this might just be me.” Each one softens the signal that there’s a person here with actual opinions.

Sam’s highest note has nothing to do with AI. It’s about reading every comment by hand: “AI could summarise them in seconds. But the point of reading them is not efficiency. It is respect.” No tactics. No framework. Just a position. That’s the format working at full power.

The same format, five completely different worldviews:

“AI won’t make you smarter if you’re just using it to skip thinking. It’ll make you faster at being shallow.”

“AI makes everything smoother. Critical AI literacy is knowing which friction to keep.”

“Regulators are using AI to monitor AI companies. This is not oversight. It is ventriloquism.”

“Don’t ask AI for ideas. You’ll get the same ten everyone else gets.”

“Prompt engineering has a blind spot: it assumes the goal is to need fewer conversations. Treating prompting and conversation as the same activity is why a lot of AI-assisted work is technically proficient and spiritually hollow.”

Five different people. Five different takes. Same structure: the first sentence earns the reader, the second turns it. The worldview is theirs. The format is portable.

The Milestone Done Right

A personal update that includes something transferable: not just the milestone, but a specific detail the reader can take with them.

What kills it: pure announcement. “I hit 1,000 subscribers” alone is not a note. “I hit 1,000 subscribers, and the note that pushed me over was one I almost didn’t post because I thought it was too obvious.” That’s a note. The personal moment earns attention; the specific detail gives readers something beyond congratulations. Karo Zieminski does this with connection requests: “I’m looking to connect with builders doing X.” Personal enough to feel real, specific enough to be useful to anyone who fits.

The Post Link Done Right (vs. Wrong)

Done right: the note body is complete. The linked article is for readers who want to go deeper. You get the value either way.

Done wrong: the note exists to redirect. The body is a wrapper for the link, not a thing on its own. This is the most common pattern in the cohort and the lowest-performing one by a consistent margin.

Code Like A Girl is the only creator in this cohort with zero self-promotional post links. Every article they share in Notes is someone else’s work. That’s not a content strategy. It’s a community strategy. They’re not using Notes to drive traffic. They’re using it to spotlight other people. That’s a different goal, and it earns a different kind of loyalty.

Before you take any of this and run with it, one thing to be honest about.

What This Study Can’t Tell You (And Why You Should Run Your Own)

Everything here comes from the AI and tech creator space.

Nine people posting about AI tools, workflows, building things. My world. The patterns probably transfer, but I can’t promise they do for a food writer or a finance creator.

I also only studied people I actually follow.

That was a choice. I could point this tool at anyone. This data is all public. But publishing detailed breakdowns of people I’ve never interacted with didn’t feel right to me. So I didn’t.

Which is also why the most useful version of this research is the one you run on your own niche. What works in my corner of Substack might not be what works in yours. The only way to find out is to look.

This article is one honest answer to the AI research process question: how to actually point AI at a dataset, surface patterns, and synthesize them.

I use this same approach for more than just Notes. For understanding markets, studying competitors, finding gaps in a niche, making sense of feedback. AI isn’t only useful for building apps. It’s how I do research now, full stop.

You don’t need to wait for the next one. Run your own version of this right now, in your own niche.

How to Analyze Your Own Substack Notes Performance With AI

Same three passes. Run them on yourself. The research prompts I used are similar to these Claude Code research prompts — structured queries for classification, ranking, and pattern detection.

Step 1: Find Your Substack Format-Engagement Gap

This is the single most useful thing you can surface about your own notes. Take your last 30. Classify each one:

Pure text — no attachment

Post link — you linked somewhere (your own article or someone else’s)

Image — standalone visual

Comment/reply — you responded to something

Calculate per format: how many notes you posted, and the average number of likes they got.

Say 12 of your last 30 notes link to an article, and those average 10 likes, but your plain text notes average 20. That means 40% of everything you post is getting half the results. The fix isn’t posting more. It’s posting fewer post links and replacing them with notes that stand on their own.
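The arithmetic in that example can be checked in a few lines. This is a sketch under one added assumption: the remaining 18 notes are all plain text, which the example above doesn't state. `format_summary` is a hypothetical helper name.

```python
from collections import defaultdict
from statistics import mean


def format_summary(notes):
    """For each format: how many notes, what share of the total, and average likes."""
    likes_by_format = defaultdict(list)
    for fmt, likes in notes:
        likes_by_format[fmt].append(likes)
    total = len(notes)
    return {
        fmt: {
            "count": len(likes),
            "share": len(likes) / total,
            "avg_likes": mean(likes),
        }
        for fmt, likes in likes_by_format.items()
    }


# 12 post-link notes at 10 likes each, 18 plain text notes at 20 likes each.
notes = [("post link", 10)] * 12 + [("pure text", 20)] * 18
summary = format_summary(notes)

post = summary["post link"]
text = summary["pure text"]
print(f"post links: {post['share']:.0%} of notes, {post['avg_likes']} avg likes")
print(f"relative performance: {post['avg_likes'] / text['avg_likes']:.0%} of pure text")
```

Swap in your own 30 `(format, likes)` pairs and the gap, if there is one, shows up in the two printed lines.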

Step 2: Analyze the Structure of Your Top Notes

Pull your five best-performing notes. For each one:

Did the opening line make a specific claim, or did it stay vague? (“Works 90% of the time” vs. “something I found helpful”)

Was the value in the note itself, or did the reader need to click to get it?

Did it name something your reader does, thinks, or feels? Or did it tell them what to do?

Would someone who’s never heard of you still find this useful?

What single idea is it about? If you need more than one sentence to answer, the note might be trying to do too much.

Your top notes will answer those five questions the same way, most of the time. That consistency is your actual content strategy, whatever you thought it was before.

Step 3: Find What the Data Contradicts About Your Content

Take everything you found in Steps 1 and 2. Now ask the harder question: what does the data show that doesn’t match what you thought you were doing?

Ask Claude this:

“Here are my last [X] notes, classified by type and sorted by likes. What patterns hold across all of them — not just the top performers? Where does the data contradict what I might have assumed about my own content? What am I posting most that’s performing least? What am I posting least that’s performing best?”
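If your notes are already in a list, assembling that prompt by hand gets tedious. Here is one way it might be scripted; the dict keys (`format`, `likes`, `body`) and the `build_prompt` name are assumptions, the prompt wording is lightly adapted from the one above, and the script only builds the text to paste into Claude without calling any API.

```python
QUESTIONS = (
    "What patterns hold across all of them, not just the top performers? "
    "Where does the data contradict what I might have assumed about my own content? "
    "What am I posting most that's performing least? "
    "What am I posting least that's performing best?"
)


def build_prompt(notes):
    """Sort notes by likes (highest first) and wrap them in the pass-three prompt."""
    ranked = sorted(notes, key=lambda note: note["likes"], reverse=True)
    lines = [
        f'- [{note["format"]}] {note["likes"]} likes: {note["body"]}'
        for note in ranked
    ]
    header = (
        f"Here are my last {len(ranked)} notes, "
        "classified by type and sorted by likes."
    )
    return "\n".join([header, *lines, "", QUESTIONS])


# Invented notes, just to show the output shape.
my_notes = [
    {"format": "post link", "likes": 4, "body": "First note text"},
    {"format": "pure text", "likes": 31, "body": "Second note text"},
]
prompt = build_prompt(my_notes)
print(prompt)
```

Keeping the format label and like count on every line gives Claude the two variables the questions depend on.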

That’s where the useful stuff usually is. Not the confirmation. The contradiction.

That’s the framework: manual, free, about 20 minutes.

To run it across 500 notes and multiple creators at once, here’s the faster path.

How to Run Substack Notes Analysis at Scale

If you want to do this properly at a larger scale, there is a setup layer this article can’t fully cover. You need the right connection, prompts that hold up, and a way to steer AI when the first output is too shallow, too broad, or just wrong.

If you want help doing that with your own niche, that’s what I walk paid members through in office hours. We set up the connection, test prompts, and adjust the workflow together. Then we pick your cohort, run the analysis, and see what the data actually says. You leave with a version you can reuse on your own.

→ Join the future practical AI builder program sessions.

I also packaged the workflow as a Claude plugin with a fetch script, an attachment classifier, pattern prompts, and a synthesis template.

→ Download the Substack Research Plugin here

If you need more related resources, find them on the AI Builder Resources page.

I’m documenting more of these. Different questions, different tools, different domains. If that’s the direction you’re interested in, stay close.

It’s the same research method I used to validate my app idea in 70 minutes: test data sources before building.

I built this into a full tool — Build a Substack Niche Analyzer With Python and Cursor documents the complete build.

For the newsletter-level landscape before going deep on individual creators — what 1,200 Substack newsletters reveal about AI and tech is the companion study.

If this was useful, pass it to one person who’s been trying to figure out what to actually post on Notes. That’s the reader this was written for.

Want to build your own research tool? Start with 15 Claude Code project ideas — from beginner to advanced systems.

Which of these five note types have you been missing?

— Jenny

Great analysis, Jenny!

Thanks for breaking it all down together.

A few viral notes can be game-changing sometimes, but what matters most is being consistent in delivering them.

In my case, pure text works incredibly well! I’ve tried a bunch of formats—from images to video—but none have worked as well as pure text.

It’s also important to respect people’s time, so I try to stay away from long-form notes. They sometimes work for personal announcements, but they rarely work for daily engagement.

Excellent Analysis Jenny, thanks for putting in this effort and providing us the learning behind it. Would love to experiment with these suggestions and see how it goes for me.